Recent Projects

Automating Reproducibility Checks for Journal Submissions

Inspired by conversations at the IGDORE Reproducibilitea journal club, this project aims to automate the simpler aspects of the reproducibility check process for code submitted alongside a journal article. I developed a script that parses a codebase and notifies users of the following barriers to reproducibility:

- Files that are referenced in the code but are missing from the codebase

- Absolute paths to files that are machine-specific (e.g. “C:/Users/Desktop/Data/data.csv”)

- Paths which are not resolvable (the locations don’t exist in the database) are caught

- Paths which are resolvable (the locations do exist) are replaced with relative paths (e.g. “../Data/data.csv”)

This project is still in early development. Try scanning a single file below (requires javascript to be enabled):

Detecting Post-Retraction Citation Awareness

This project used natural language processing of the fulltext of openly accessible research works to discern whether an article that cites a retracted article did so knowingly (acknowledging the retraction) or not. I began the project with the intention of providing data to inform the discussion in metascience about whether and how damaging post-retraction citations really are.

Prior attempts at classifying post-retraction intent used rule-based heuristics (e.g. “Does the citing sentence include the word retracted in it? Does the paper also cite a retraction notice?”). The semi-manually annotated datasets that came from this prior work allowed me to instead take a machine learning approach that made no prior assumptions of which textual or metadata features would be meaningful.

Hijacked Journal Detection Toolbox

Inspired by Anna Abalkina’s reporting on the hijacking of the Russian Law Journal, I used Crossref’s API to investigate how these paper mill articles were able to receive valid DOIs, and discovered a new method fraudsters are using to spoof the identity of journals they are hijacking. Rather than just buying the web-domain or typosquatting a similar web domain, they are able to register DOIs under the same journal title in 3rd party DOI provider databases like Crossref.

Loading...

In the process of investigating these hijackings, I found myself using a set of API calls and scripts often, and I am developing them into a browser extension to help other sleuths quickly check for signs of a journal hijacking. Here are some common API calls:

Type a journal title search query to search for duplicate journal titles (can be a sign of a hijacking) registered in Crossref’s database. The tool will show only up to the first 30 duplicate listings (double click submit to try a different query. May take a while to load). If your query is overly broad (“Science”, e.g.) it’s possible it will fail to detect due to technical reasons4

Loading...

Loading...this can sometimes take up to 10 seconds or more

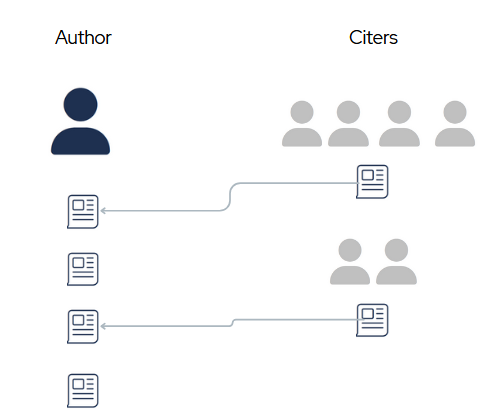

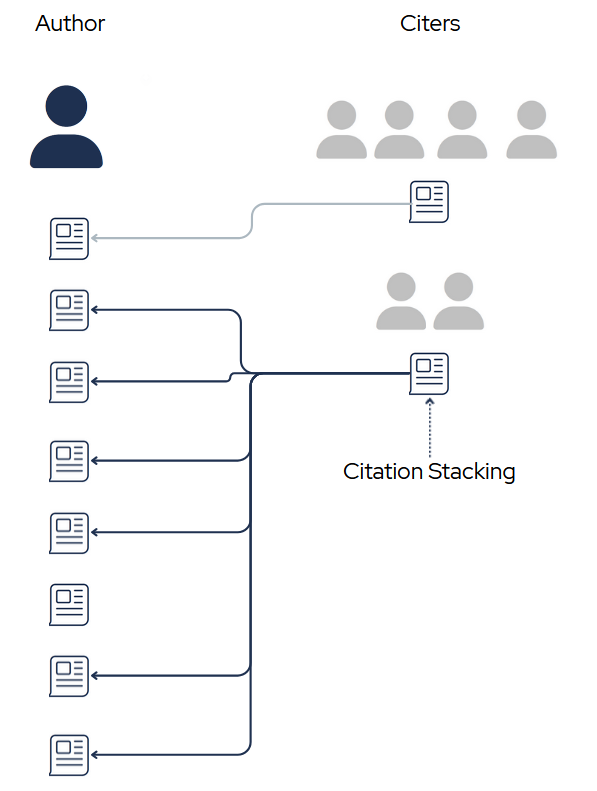

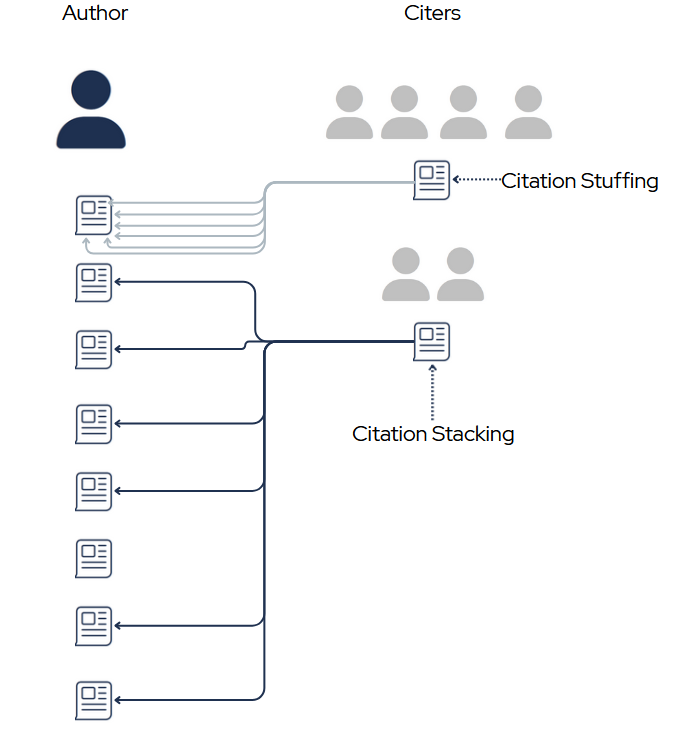

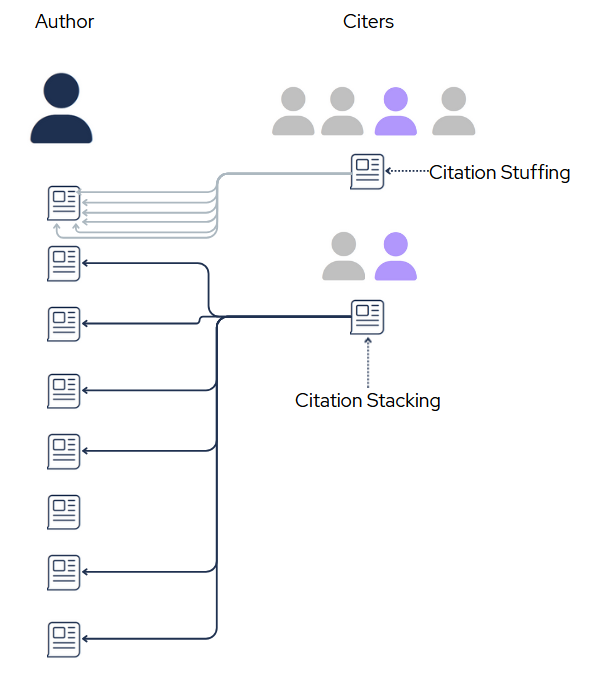

Discovering Unreasonable Citation Stacking

I’m developing an algorithm to scan the scientific literature and identify authors that have an unusually high number of papers with signs of citation stacking (the presence of many citations to a single author (or group of authors) that are not the result of honest scientific work but rather the result of gaming citation metrics). I’m planning to turn this into a package that is inter-operable with many types of data sources (Scopus, Crossref, OpenAlex) for others to use. Inspired by El País’s reporting on a case of extreme self-citation stacking among an esteemed computer scientist.

Here are a few easily detectable ways in which the standard citing pattern can be manipulated:

Predicting Paper Acceptance Status from Peer Reviews

I used Computer Science conference paper peer reviews to train a ML model to predict whether the paper was accepted or rejected. If done well, algorithms like these can lighten the load of editors’ jobs by helping them synthesize or make decisions on acceptance/rejection altogether (though much improvement must be made before that responsibility can be safely passed). First attempts had poorer performance than I had hoped for, so I am currently revamping with a more sophisticated feature representation that will hopefully capture more nuance.

Older Projects

Detecting and Denoising EEG Artifacts

Replicating the results of Li et al. 2023. Created semi-simulated noisy EEG data using an open dataset of common EEG artifacts to train a segmentation neural network to detect where artifacts occur. Although an adjustment needed to be made to match the codebase with the presented methods in the paper, the accuracy of the artifact detection network largely replicated. Approaches like these are promising, as artifact removal is both a highly time-consuming and bias-injecting step in the standard preprocessing pipeline of EEG experiments, however I seriously question the generalizability of this group’s model due to major flaws in the assumptions behind the ‘clean’ EEG dataset used in training.

Automatically Detecting Sleep Spindles on EEG

Replicating the best performing algorithm from Lacourse et al. 2018 and comparing to a replicated version of Lustenberger et al. 2016. Adding fuzzy logic to the sliding window reduce false negatives on by-event analysis due to singular sample outliers.

Cognitive Task Anti-Cheating

Spinoff analysis that came from working on a working memory cognitive task experiment where participants were instructed to attend to the center of their visual field and were prompted to recall stimuli according to, among other things, which visual hemifield the stimuli were presented in. Explored the feasibility of detecting a bias in performance on one side that would suggest participants are not attending to the center of their visual field, but rather ‘cheating’ to one side to improve their odds by minimizing the amount of information they must hold in their working memory.

Footnotes

A previous version of this site reported higher model performance; while revisting the code in preparation for presenting at Metascience 2025, I noticed some rows in the annotated dataset I used for training were duplicated, and the resulting performance post-cleaning is reflected here now.↩︎

Training was done in a potentially over-conservative method: 70/30 train test split with 10-fold CV on the train set, the best performing model during CV being applied to the test set. With such a small training set to begin with, this approach may have lead to underfitting despite using an approach well suited to small datasets (logistic regression). When revamping this project for publication I hope to do a targeted analysis of under/overfitting throughout training with a larger dataset.↩︎

Here the retracted citation is [43], and the word “decreased” is not being used in reference to the citation, but its presence indicates a routine description of scientific findings, the kind of routine description where retracted works often were unacknowledged.↩︎

Currently I am not using cursors as I expected users to have a particular journal in mind. If none of the first 30 results for the query match exactly, it will not (as of 4/8/25) search the next page of results. This can be easily fixed when I get around to it.↩︎